Nonresponse bias examples (and how to crush it with better surveys)

Concise, targeted surveys combined with a better understanding of user intent can help you reduce nonresponse bias and get better survey results.

Getting accurate survey data is hard — it always has been.

Throughout my startup career, I've seen first-hand how difficult it can be to get a meaningful "n" (sample size) for surveys. I know how frustrating it is, particularly for earlier-stage startups that don't have many users, to make a low response rate work.

Even the most "perfect" survey will not have a 100% response rate. It's likely not even over 50%.

That does NOT mean that you should settle for low response rates and insufficient data, however!

You still have a lot of room for improvement on your surveys, particularly in reducing nonresponse bias and improving the depth and accuracy of your results.

Nonresponse bias occurs when specific cohorts of users respond less often, or less completely, than other users. These individuals either don't respond to your survey at all or skip specific questions within it. There are various reasons for this, but the most common causes are poor survey design and limited incentives to respond.

This is why your surveys often have more respondents at the two poles than in the middle.

People who hate your product or are dissatisfied may respond more often (negatively), and so may power users who love your product (positively).

This is natural, to a degree.

However, when you find consistently low response rates within specific user cohorts, or unusually low response rates to particular questions or types of surveys, you might be facing nonresponse bias.

Why nonresponse bias is bad for business

If you consistently face nonresponse bias, your survey data will be distorted and, most importantly, you risk making incorrect product and growth decisions based on it. This is especially true for SaaS companies, which have fewer traditional ways of measuring user feedback and engagement.

While a big brand like Coca-Cola might spend millions of dollars on focus groups and brand research, getting user intent data and user insights through low-cost means is essential if you're a startup SaaS company.

That's because you can't just ignore surveying.

These days, smart product development always includes some level of surveying. Surveying influences how the product is managed and developed, how teams are built, how resources are allocated, and how the business generally evolves.

You must accurately understand your customer by reducing nonresponse bias and having accurate (or as precise as possible) survey data.

One positive effect of reducing nonresponse bias is that you gain a much clearer insight into your product's actual pain points and areas for improvement!

Understanding nonresponse bias

There are two main kinds of nonresponse bias: generalized and item-level.

Generalized nonresponse bias

The first kind is generalized nonresponse bias. This is when folks don't complete your survey at all. You might show it as a pop-up, email it, or give it a small dedicated spot in-product. Whatever the channel, they don't take the time to answer your question(s).

First, however, let's clarify: a large portion of your user base will NOT respond to your survey.

The average response rate for an NPS survey is 12%, while it can range from 5% to 50% for other types of surveys.

You don't need to get 100% engagement, nor should you assume that falling well short of it always means you're facing severe nonresponse bias.

That said, you could miss valuable insights if you consistently fail to hear from entire segments of users, whether they're positive or negative, new users, or some other specific cohort.

Item-level nonresponse bias

Another form of nonresponse is specific question-level or item-level nonresponse. This is when folks might answer some of your questions but not all. This leaves you with incomplete data that doesn't give you complete insight into certain aspects of your survey.

This is still a challenge, but it's much easier to fix and something you'd much prefer to face than broad and generalized nonresponse.

Causes of nonresponse bias

Let's walk through some of the main ways nonresponse bias is triggered.

Survey length and complexity

You will have much less participation if your surveys are consistently long, complicated, and demanding. Folks have a limited attention span. They're pulled in every single direction, every day, every minute, so you must keep your surveys manageable.

This can include everything from the length of the survey itself to how you structure questions, but generally, the more straightforward and concise the survey, the better it will be and the more data you'll be able to collect.

Relevance and segmentation

Customers may not see any value or reason to participate in your survey if you're asking questions that aren't relevant to them.

If you have a user in your product who is from HR and you ask them engineering-related questions, they won't have any valuable input. They're not going to respond, and really, you don't want them responding!

It is critical to have accurately segmented and targeted surveys. Folks should only be presented with survey content relevant to their persona.

Targeting and frequency

One of the other significant issues I consistently see with surveys is the targeting and frequency. Some folks send out a survey every day and blow people up, and that's a great way to burn users out and condition them to X out of the nudge.

Another is simply timing them incorrectly. There's no point in running an in-app survey for a two-hour window overnight, on a holiday, or over a weekend, when most of your users aren't in the product.

You need to understand your users' behavior and time the survey correctly for optimal participation. You must also look at your user base and understand the maximum frequency with which you can layer surveys onto the in-product experience.

Think about this: your surveys are just one in-app message going out.

You've also got nudges related to product releases, bug fixes, promotions, referrals, and so many other things that your team might be pushing out. Your surveys are just one of many. However, unlike the other elements, which are just announcements or in-product CTAs, your survey only has value if you get feedback.
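To make the timing and frequency advice concrete, here is a minimal sketch of a "should we show a survey now?" check. It assumes you know each user's local time and when they were last surveyed; the quiet hours, the weekend rule, and the one-survey-per-14-days cap are hypothetical values for illustration, not recommendations, and holidays are left out because they depend on your own calendar.

```python
from datetime import datetime, timedelta

# Hypothetical limits for illustration; tune them to your own users' behavior.
MAX_SURVEYS_PER_WINDOW = 1       # at most one survey...
WINDOW = timedelta(days=14)      # ...per rolling 14-day window
QUIET_START_HOUR = 22            # no surveys from 10 p.m....
QUIET_END_HOUR = 8               # ...until 8 a.m. (user's local time)


def can_show_survey(now: datetime, past_survey_times: list[datetime]) -> bool:
    """Return True if a survey may be shown to this user at `now`.

    `now` is assumed to already be in the user's local time zone.
    Holidays are not handled here; you would need your own calendar for that.
    """
    # Skip weekends (Monday is 0, so Saturday is 5 and Sunday is 6).
    if now.weekday() >= 5:
        return False
    # Skip overnight quiet hours.
    if now.hour >= QUIET_START_HOUR or now.hour < QUIET_END_HOUR:
        return False
    # Frequency cap: count surveys already shown within the rolling window.
    recent = [t for t in past_survey_times if now - t < WINDOW]
    return len(recent) < MAX_SURVEYS_PER_WINDOW
```

For example, a Tuesday at 10 a.m. with no recent surveys passes, while the same time four days after a previous survey does not. Because surveys compete with every other in-app message, you may prefer to count all nudges against a shared cap rather than surveys alone.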

Examples of nonresponse bias in SaaS

Standard feedback surveys

One of the main ways to see nonresponse patterns is through standard feedback surveys. We've all received a billion of these via email or pop-ups.

We're conditioned not to engage unless we have strong positive or negative feelings.

When was the last time you got a random email or feedback request from a brand you interacted with six months ago and felt compelled to respond?

When customer feedback is polarized, you'll have a lot of folks who are positive and a lot of folks who are negative, and you might have a missing middle in the data. That doesn't mean the middle isn't there. It just means you're not capturing it because of nonresponse bias.

So, you want to ensure you have incentives for folks to respond. That could be literal incentives like discounts or credits for participation, or it could be your messaging. Mention why feedback is essential and how it helps build a better experience for that user in the future!

Features and product feedback

Feature and product feedback are other areas where nonresponse bias can be an issue. If you're collecting feedback on new feature requests, development, or fake door tests, remember that your power users, the ones who are most engaged, will be much more motivated to give you feedback and help guide the direction of the product.

Generally, this is a good thing! You want to optimize your product to some level for the users who are most engaged, paying the most, and generally in love with it.

Here's the reality, though: if you have many users, especially if you're a larger company, you will have a wide range of usage intensity.

You'll have your power users, but you'll also have a large base of users who use the product once a month, a couple of times a month, or once a week.

These folks are paying customers as well.

You shouldn't only build your product roadmap based on what your power users say.

It's like running a gym where your most dedicated members keep requesting more racks for squatting, deadlifting, and Olympic lifting. If you only listened to them, your whole gym might fill up with racks, only for you to realize later that the other 90% of your members, who come in much less often (but still pay), really just want some light weights and cardio machines.

You want to ensure that your more casual users' needs are not underrepresented. One of the best ways to do this is to ensure you are surveying users in various ways. While your power users are in-app all the time, and you can survey them in-app, getting feedback from those more casual users might take more effort across other channels. Just make sure you target them where you can meet them!

Customer support

Customer support reviews are another common source of nonresponse bias.

When your customer support team faces a new ticket, they do their best to provide an excellent resolution.

Sometimes, that happens, and you get positive feedback. Sometimes, you get negative feedback.

“The team was not able to fix my issue.”

“The team was slow.”

“They were rude.”

Or

“They were great!”

While timing is always important to reduce nonresponse bias, it is even more critical with surveys involving customer support.

It's important to survey people not only after a ticket is closed but also while it's still in progress, AND to include a mix of resolved and unresolved tickets, so that you're not only getting the after-the-fact answer.

For example, suppose someone submits a ticket, and your team is polite, cordial, and timely as it begins working on it and interacting with the customer. You might ask that user, midway through the process, how they feel about the team's professionalism.

They might score it a 9 or a 10. Great so far!

But let's say that, unfortunately, due to technical issues, what they're trying to do is impossible, and you survey them after the fact.

Even though the team's engagement and politeness never changed, the ticket wasn't resolved. So when asked about it, that user might give you a negative rating overall, or not respond at all!

Keep this in mind as you build your timing strategy!
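One way to check whether unresolved tickets are quietly dropping out of your support feedback is to break response rates down by when the survey was sent and how the ticket ended. The sketch below assumes each survey record carries hypothetical `stage`, `resolved`, and `responded` fields, with `resolved` being the ticket's eventual outcome joined in after it closes; it is an illustration, not any particular helpdesk tool's export format.

```python
from collections import defaultdict


def response_rates_by_group(records):
    """Compute support-survey response rates per (stage, resolved) group.

    Each record is a dict with hypothetical keys:
      'stage'     -> 'mid_ticket' or 'post_close'
      'resolved'  -> the ticket's eventual outcome (True/False)
      'responded' -> whether the user answered the survey
    """
    sent = defaultdict(int)
    answered = defaultdict(int)
    for r in records:
        key = (r["stage"], r["resolved"])
        sent[key] += 1
        answered[key] += int(r["responded"])
    return {key: answered[key] / sent[key] for key in sent}
```

If the `("post_close", False)` group responds far less often than the others, your post-close scores are probably overstating satisfaction, which is exactly the gap that mid-ticket surveys help fill.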

Onboarding experience

Onboarding is one of the most critical areas to optimize, and much feedback is collected around onboarding experiences.

Was it positive?

Was it negative?

What were the points of friction?

Here's the dilemma: onboarding usually takes only a couple of minutes, so if folks complete it successfully, you can get positive feedback quickly.

The catch is that some folks are never successfully onboarded and so are never in a position to give you feedback.

Think about it: you might sit down, look at your onboarding numbers, and say, "Great, we had some fall-off, but most of the onboarding feedback is positive."

But you're missing a significant "lost" segment: folks who tried to go through onboarding but never got far enough to leave feedback, submit a support ticket, or get upset with your team, because they never completed onboarding!

One important thing to do is follow up with users on their onboarding experience, especially if they didn't complete it. You might say, "Hey, we noticed that you started but didn't complete onboarding. Can we help?" Getting feedback proactively is super important.
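Finding the "lost" segment for that follow-up is usually a simple query over onboarding events. Here is a minimal sketch, assuming you record hypothetical `onboarding_started` and `onboarding_completed` timestamps per user; the two-day grace period is an arbitrary choice so you don't nudge people who are still mid-flow.

```python
from datetime import datetime, timedelta


def stalled_onboarding_users(users, now, grace=timedelta(days=2)):
    """Return IDs of users who started onboarding but haven't finished it.

    `users` is a list of dicts with hypothetical keys 'id',
    'onboarding_started', and 'onboarding_completed' (datetime or None).
    `grace` gives people time to finish before you follow up.
    """
    return [
        u["id"]
        for u in users
        if u["onboarding_started"] is not None
        and u["onboarding_completed"] is None
        and now - u["onboarding_started"] >= grace
    ]
```

The resulting IDs are the users who would never show up in feedback collected at the end of onboarding, so they are the ones to reach by email or another channel.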

Identifying nonresponse bias

There are a couple of main ways to tell whether your responses reflect a typical pattern or one heavily skewed by nonresponse bias.

One of the first and most effective ways to do this is to properly segment out your user base and compare response rates across those cohorts.

You might segment them by their in-product age. How long have they been using the product? One day, one year, ten years?

Or you might segment by their role.

Or you might segment by their activity: how often are they in the product?

However you choose to segment users, look for segments with uniquely and consistently lower response rates and little other explanation. Make sure the targeting, survey copy, and so on are the same across segments; if those segments still respond less, you might be facing nonresponse bias and missing out on valuable data.

Adjust your strategies so that these groups respond at rates on par with everyone else. I don't want to suggest that you need complete parity across all of your cohorts; that's just not realistic. But if you see large, significant, and consistent gaps, they're certainly worth looking into.
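To separate "large, significant" gaps from noise, you can compare each cohort's response rate against all other cohorts combined. The sketch below uses a standard two-proportion z-test; that choice of test is an assumption on our part, and the cohort names and counts in the usage example are made up. A significant result only tells you the gap is unlikely to be chance; you still need to rule out differences in targeting, copy, and timing.

```python
import math


def flag_low_response_cohorts(cohorts, z_threshold=1.96):
    """Flag cohorts whose response rate is significantly below everyone else's.

    `cohorts` maps a cohort name to (surveys_sent, responses_received).
    Each cohort is compared with all other cohorts combined using a
    two-proportion z-test; z below -z_threshold counts as significantly lower.
    """
    total_sent = sum(sent for sent, _ in cohorts.values())
    total_resp = sum(resp for _, resp in cohorts.values())
    if total_sent == 0:
        return []
    pooled = total_resp / total_sent  # overall response rate
    flagged = []
    for name, (sent, resp) in cohorts.items():
        rest_sent = total_sent - sent
        rest_resp = total_resp - resp
        if sent == 0 or rest_sent == 0:
            continue  # nothing to compare against
        rate = resp / sent
        rest_rate = rest_resp / rest_sent
        se = math.sqrt(pooled * (1 - pooled) * (1 / sent + 1 / rest_sent))
        if se == 0:
            continue  # everyone (or no one) responded; no variance to test
        z = (rate - rest_rate) / se
        if z < -z_threshold:
            flagged.append((name, round(rate, 3), round(z, 2)))
    return flagged
```

With hypothetical counts of 20 responses from 400 new users, 90 from 300 power users, and 60 from 500 casual users, only the new-user cohort is flagged: the casual cohort's 12% rate is lower than average but not by enough, at these sample sizes, to clear the threshold.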

Survey response patterns

You also need to examine your surveys item by item to determine if specific patterns or behaviors indicate users consistently skip over questions.

For example, say you have a three-item survey in which all three questions are optional.

Folks submit answers to questions one and three but not number two. Here, you might want to look at the questions themselves, particularly those with a low response rate (#2), to understand what about the language, style, content, or copy might be causing folks to either skip the question or to fall off of the survey in general.

Or, in another survey, answers to all questions might be required, and one question might be particularly invasive or otherwise challenging to answer. In that case, people might abandon the survey entirely, and you end up losing all of their data.
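For optional questions, the item-by-item check described above comes down to computing, for each question, the share of submitted surveys that actually answered it. A minimal sketch, assuming each submission is stored as a dict of question ID to answer and that a missing key, `None`, or an empty string means the question was skipped (the question IDs are hypothetical):

```python
def item_response_rates(responses, question_ids):
    """Share of submitted surveys that answered each question.

    `responses` is a list of dicts mapping question ID -> answer; a missing
    key, None, or an empty string counts as a skipped question.
    """
    n = len(responses)
    rates = {}
    for q in question_ids:
        answered = sum(1 for r in responses if r.get(q) not in (None, ""))
        rates[q] = answered / n if n else 0.0
    return rates
```

Calling `min(rates, key=rates.get)` on the result points you to the question to rewrite first. This only covers skipped optional questions; for surveys with required questions, you would instead track where people abandon the survey, since their partial answers may never be submitted.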

Always ask yourself: What's the simplest way I can phrase this question for maximum clarity?

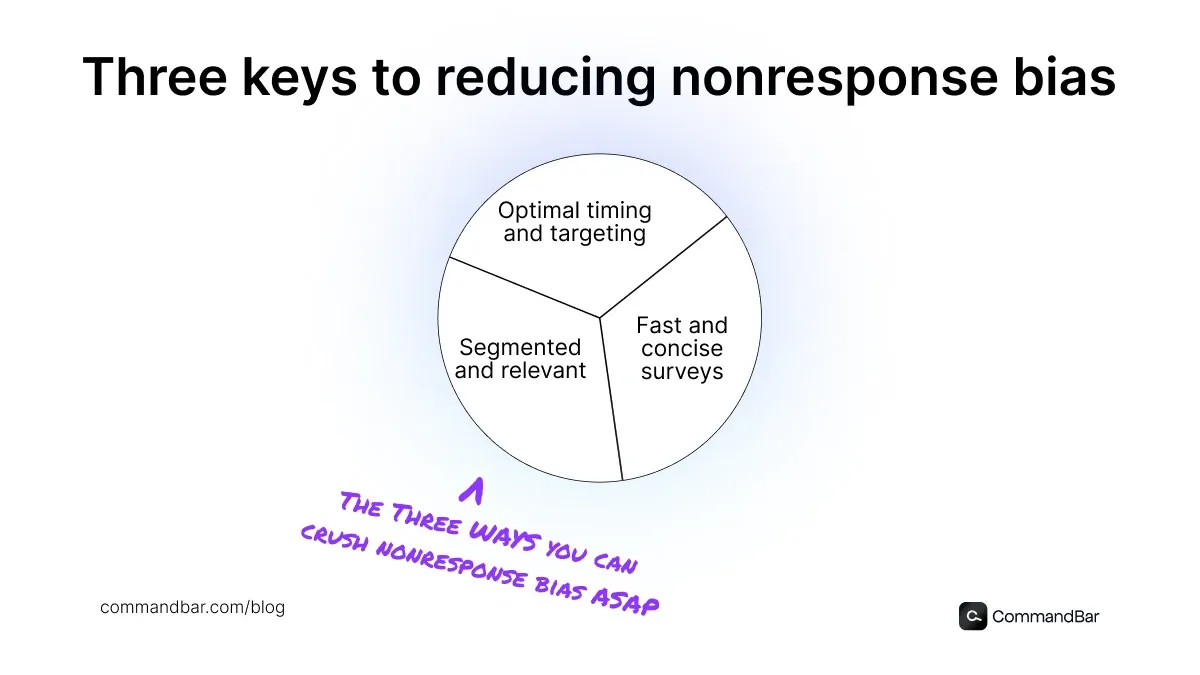

Solving nonresponse bias

The first thing you should do in your general surveying and feedback collection is understand the channels you have at your disposal.

For some small startups, that might just be your product. That could be the only place, along with email, where you can actually reach out to your users. But as you grow, you might have a dedicated Slack channel, phone calls, or video calls with those folks. As you get big, you can do social media polling, brand awareness polling, research sessions, paid user studies, and all of those more advanced ways.

Understand where you can reach your customers, where they prefer to be reached, and where you're most likely to get quality answers from them. Meet them where they are, and set yourself up to succeed by making it as easy as possible for your users to give you feedback.

Follow up!

It's also important to understand how you want to follow up on your surveys. Some surveys will be one-offs where you ask a really simple question. Maybe it's a customer effort score of 1 to 5, or maybe it's NPS feedback that you ask once a quarter. Others will be really important and something that you want to follow up on repeatedly.

Having a clear and structured plan and experimentation mindset around your surveying strategy is essential.

Let's say you run a survey on day one and get a 5% response rate. You might want to run that survey again seven days later, and then again 30 days later. The goal is to keep retargeting non-respondents until the survey flow is exhausted, so that each of them has a genuine opportunity, at three different times, to answer. Balance persistence with respect for your users: be persistent without being annoying or intrusive.

Done right, this is an effective way to boost your response rate without offending your users. Most survey software lets you trigger a survey to run again after a specific period of time has passed. Make sure you have a follow-up plan in place.
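The day 1 / day 7 / day 30 cadence can be expressed as a small scheduling rule that stops as soon as someone responds. This is a sketch under two assumptions: "30 days later" is read as 30 days after the first send (it could equally mean 30 days after the second), and you track per user how many attempts have already been made.

```python
from datetime import date, timedelta

# Offsets (in days) from the first send, following the day 1 / day 7 / day 30
# cadence. Reading "30 days later" as 30 days after the first send is an
# assumption.
FOLLOW_UP_OFFSETS = (0, 7, 30)


def next_attempt_date(first_sent: date, attempts_made: int, responded: bool):
    """Return when to show the survey next, or None to stop.

    Stops as soon as the user responds or all scheduled attempts are used,
    so nobody is asked more than len(FOLLOW_UP_OFFSETS) times.
    """
    if responded or attempts_made >= len(FOLLOW_UP_OFFSETS):
        return None
    return first_sent + timedelta(days=FOLLOW_UP_OFFSETS[attempts_made])
```

For a survey first sent on March 1, a non-respondent would be asked again on March 8 and March 31, and never after that. Capping the number of attempts is what keeps persistence from turning into annoyance.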

Better incentives

Another critical aspect to consider is whether users WANT to give you feedback. Some will be incentivized to do that because they want to improve the product—they are power users. Other folks might need a little bit more of a nudge. We're all familiar with those little surveys where you can get a $5 gift card for Starbucks or Target for responding. I'm usually more incentivized to do that myself because it's a small but meaningful reward!

Another way to do this at a lower cost, while still boosting response rates somewhat, is to run a sweepstakes: folks who give feedback are entered into a drawing for a significant prize. It usually costs less in total than individual rewards but still offers an incentive attractive enough to boost response rates and reduce some of that nonresponse bias. Just be careful to mon.
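Whether a sweepstakes is actually cheaper depends on how many responses you expect. Per-response rewards cost (responses × reward), while a sweepstakes costs the prize no matter how many people enter, so the sweepstakes only wins once responses exceed prize ÷ reward. The sketch below runs that arithmetic; all numbers are hypothetical planning inputs, and it ignores the fact that a guaranteed reward and a chance at a prize may not motivate people equally.

```python
def incentive_costs(expected_responses: int, reward_per_response: float,
                    sweepstakes_prize: float):
    """Compare total payout for per-response rewards vs. one sweepstakes prize.

    All inputs are hypothetical planning numbers, not benchmarks.
    Returns (per_response_total, sweepstakes_total, break_even_responses).
    """
    per_response_total = expected_responses * reward_per_response
    # The sweepstakes only costs less once responses exceed prize / reward.
    break_even = sweepstakes_prize / reward_per_response
    return per_response_total, sweepstakes_prize, break_even
```

For example, `incentive_costs(400, 5, 500)` returns `(2000, 500, 100.0)`: 400 responses at $5 each cost $2,000 in gift cards, a single $500 prize costs $500, and the two break even at 100 responses.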

How we build better surveys and reduce nonresponse bias with Command AI

I’ve seen our Command AI customers deploy our tools in several ways to increase survey responses and reduce nonresponse bias.

One of the most significant ways is by creating a more personalized customer experience throughout the entire product.

With survey software that only lets you send out broad surveys and collect data, you might be able to do a good job.

However, you can create a much more personalized survey when your copy, timing, and targeting are based on actual user intent.

This can be done through pretty standard triggers, like sending a survey after a certain period of time, but it can also be done after specific actions, like a rage click, where a frustrated user clicks repeatedly on the same spot.

These micro surveys, which let you get really quick, hyper-actionable feedback, are very effective at reducing nonresponse bias because they cut the effort a user needs to put into giving feedback to almost zero. This won't work for a longer-form survey that requires text input and careful thought. Still, if you're after a 1-to-5 rating, a 1-to-10 score, or a happy-face/sad-face response, running a quick micro survey with Command AI is a great way to avoid overwhelming your users while still getting that valuable insight.
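To illustrate the idea of an action-based trigger, here is a generic rage-click heuristic: several clicks within a short time window, all close to the same point. This is not Command AI's detection logic or API. The `Click` type, the thresholds (3 clicks, 1 second, 30 pixels), and `maybe_trigger_micro_survey` are hypothetical names and values chosen for the sketch, and clicks are assumed to arrive in chronological order.

```python
import math
from dataclasses import dataclass


@dataclass
class Click:
    t_ms: int   # timestamp in milliseconds
    x: float    # page coordinates in pixels
    y: float


def is_rage_click(clicks, min_clicks=3, window_ms=1000, radius_px=30.0):
    """Return True if the latest `min_clicks` clicks form a rage-click burst.

    Clicks are assumed to be in chronological order. A burst here means the
    last `min_clicks` clicks span at most `window_ms` and all land within
    `radius_px` of the first click in the burst. Thresholds are illustrative.
    """
    if len(clicks) < min_clicks:
        return False
    burst = clicks[-min_clicks:]
    if burst[-1].t_ms - burst[0].t_ms > window_ms:
        return False
    x0, y0 = burst[0].x, burst[0].y
    return all(math.hypot(c.x - x0, c.y - y0) <= radius_px for c in burst)


def maybe_trigger_micro_survey(clicks, already_surveyed: bool) -> bool:
    """Hypothetical hook: show a one-question survey on the first rage click."""
    return not already_surveyed and is_rage_click(clicks)
```

The `already_surveyed` guard is there so one frustrating moment doesn't produce a string of pop-ups, which would only add to the survey fatigue these micro surveys are meant to avoid.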

You don’t want to deal with survey fatigue and all the pop-up fatigue that so many of us have.

The other powerful example is for folks using Copilot, our AI agent. Users ask questions in natural language, get quick answers, and can also be launched into in-app product experiences. When a user in your product runs into a specific issue, you can trigger a survey or an experience from Copilot into the product. This lets you blend the in-product UX with your help or feedback system.

Altogether, concise and targeted surveys mixed with higher user intent knowledge can help you reduce nonresponse bias and drive better survey results.